Evolving global regulations are reshaping AI infrastructure decisions. Find out why keeping data under your control is key to building compliant, auditable, and high-performing AI systems.

AI is moving quickly from experimentation into production environments, and that shift is bringing new scrutiny. As organizations build and deploy AI models, questions about where data lives, how it is processed, and who can access it are becoming central to every decision. At the same time, regulations continue to evolve, placing greater responsibility on enterprises to demonstrate control and accountability.

For IT, security, and compliance leaders, this is an issue that requires clarity. AI initiatives can no longer be separated from data governance strategies. Infrastructure choices now play a direct role in regulatory posture, and the organizations that get this right will be able to innovate with confidence rather than hesitation.

AI data sovereignty concerns apply not only to training data, but also to inference inputs, outputs, prompts, retrieved content, logs, and other data used by AI systems. As global laws evolve, one way to reduce sovereignty complexity is to keep your AI workloads on-premises. This can help you maintain jurisdictional compliance, strengthen auditability, and reduce regulatory risk.

Why data sovereignty matters for AI

At its core, data sovereignty is about control. It refers to the principle that data is governed by the laws and regulations of the country or region where it is stored and processed. For AI workloads, this becomes especially important because models are only as compliant as the data they are trained on.

AI systems often rely on large, diverse datasets that may include sensitive information. This could range from customer records and financial data to proprietary intellectual property. When that data crosses borders or is processed in environments outside of defined jurisdictions, organizations may lose visibility into how it is handled and whether it complies with local regulations.

The complexity increases as AI models continue to learn and evolve. Training data, intermediate outputs, and even model behavior can fall under regulatory scrutiny. This means that sovereignty is not limited to where data is stored. It extends to how it is processed, how it moves, and how decisions are made based on it.

As a result, AI data sovereignty is becoming a foundational consideration for enterprise AI strategies. It influences everything from infrastructure architecture to vendor selection, and it shapes how organizations approach risk.

How global regulations are changing AI deployment

Regulatory frameworks are rapidly evolving to address the unique challenges introduced by AI. Established laws such as the European Union’s General Data Protection Regulation (GDPR) already set strict requirements for data protection and privacy, including clear rules around data handling, consent, and cross-border data transfers.

At the same time, newer regulations are emerging with a more direct focus on AI. The EU’s AI Act introduces additional layers of accountability, particularly for high-risk AI systems. It emphasizes transparency, traceability, and the ability to audit how models are trained and deployed.

These developments point to a broader shift. Regulators are no longer focused solely on protecting data at rest. They are also concerned with how data is used within AI systems, how models make decisions, and whether those processes can be explained and verified.

For organizations, this creates a more complex compliance landscape. It’s no longer enough to ensure that data is stored in a specific region. You also need to understand how your AI infrastructure processes that data, whether those processes align with regulatory expectations, and how you can demonstrate compliance when required.

This is where infrastructure decisions begin to carry more weight. The way you design your AI environment can either simplify compliance or make it significantly more difficult.

Data residency vs. data sovereignty vs. localization

As compliance requirements grow more complex, it is important to clarify three commonly used terms. Although they are often used interchangeably, they represent distinct concepts that have different implications for AI infrastructure.

Data residency refers to the physical location where data is stored. For example, an organization may choose to store its data in a specific country to meet local requirements or customer expectations. Residency focuses on storage location, but it does not necessarily address how data is accessed or processed.

Data sovereignty goes a step further. It considers not only where data is stored, but also which legal jurisdiction has authority over it. This includes who can access the data, who can process it, and what obligations apply to its use. Sovereignty introduces legal and governance considerations that extend beyond simple location.

Data localization is more restrictive. It requires that data be stored and processed within a specific geographic boundary, often with strict limitations on cross-border transfers. Localization laws are designed to ensure that sensitive data remains fully under the control of local authorities.

For AI workloads, these distinctions matter. A cloud provider may offer regional hosting that satisfies data residency requirements, but that does not automatically ensure full data sovereignty. If users access or process data outside of the intended jurisdiction, compliance risks can still arise.

Understanding these differences can help you make more informed decisions about where and how to deploy AI systems. It also highlights the importance of aligning infrastructure with regulatory intent rather than relying on surface-level compliance measures.
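To make the three terms concrete, here is a minimal Python sketch that checks a single data flow against each definition given above. It is an illustration only, not a legal test: the `DataFlow` class, its fields, the region codes, and the two sample flows are all hypothetical, and sovereignty in particular is reduced to a simplified rule (the data is stored in the required region and no other jurisdiction has legal authority over it).

```python
from dataclasses import dataclass
from typing import Dict, Tuple


@dataclass(frozen=True)
class DataFlow:
    """One hypothetical data flow in an AI pipeline; every field is illustrative."""
    name: str
    storage_region: str                  # where the data is stored
    processing_regions: Tuple[str, ...]  # every region where it is accessed or processed
    legal_authorities: Tuple[str, ...]   # jurisdictions assumed to have legal authority over it


def meets_residency(flow: DataFlow, region: str) -> bool:
    # Residency only asks where the data is stored.
    return flow.storage_region == region


def meets_localization(flow: DataFlow, region: str) -> bool:
    # Localization asks that storage and all processing stay inside the boundary.
    return meets_residency(flow, region) and all(
        r == region for r in flow.processing_regions
    )


def meets_sovereignty(flow: DataFlow, region: str) -> bool:
    # Simplified assumption: sovereignty holds when the data is stored in the
    # region and only that region's jurisdiction has legal authority over it.
    return meets_residency(flow, region) and all(
        a == region for a in flow.legal_authorities
    )


def classify(flow: DataFlow, region: str) -> Dict[str, bool]:
    """Return the result of all three checks for one flow."""
    return {
        "residency": meets_residency(flow, region),
        "localization": meets_localization(flow, region),
        "sovereignty": meets_sovereignty(flow, region),
    }


# Hypothetical examples: regionally hosted cloud data that is also accessed
# from another region, and a dataset kept entirely on-premises.
regional_cloud = DataFlow("rag-prompts", "EU", ("EU", "US"), ("EU", "US"))
on_prem = DataFlow("fine-tuning-set", "EU", ("EU",), ("EU",))

for flow in (regional_cloud, on_prem):
    print(flow.name, classify(flow, "EU"))
```

In this sketch the regionally hosted flow passes the residency check but fails the other two, which mirrors the point above that regional hosting alone does not guarantee sovereignty.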

How on-premises AI workloads support compliance

One of the most persistent assumptions in AI is that innovation requires moving data to the cloud. That belief is often driven by the sheer infrastructure demands of training modern AI models, which require significant compute power, high-performance storage, and the ability to scale quickly. Cloud environments offer scalability and flexibility, but they can introduce challenges when it comes to maintaining strict control over data.

On-premises AI training presents a different approach. By keeping data within your own infrastructure, you retain direct oversight of how it is stored, accessed, and processed. This makes it easier to enforce jurisdictional boundaries and align with data sovereignty regulations.

There are several practical benefits to this model. First, it simplifies compliance with localization requirements by ensuring that data does not leave a defined geographic region. Second, it enhances auditability. When AI workloads run on-prem, you can maintain detailed logs of data access, processing activities, and model behavior, all within a controlled environment.

Security also becomes more straightforward to manage. You can implement consistent encryption standards, access controls, and monitoring practices without relying on third-party environments that may operate under different regulatory frameworks.

In addition, on-prem AI training supports clearer data governance. You have full visibility into the lifecycle of your data, from ingestion to model training to output generation. This visibility is critical when responding to regulatory inquiries or conducting internal audits.
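As one illustration of what detailed, verifiable logs of data access can look like, the sketch below keeps an append-only audit log in which each entry stores a SHA-256 hash of the previous entry. This hash-chaining technique is a common general pattern and is an assumption here, not something prescribed by any regulation or product mentioned in this article; the function names, field names, and sample events are hypothetical.

```python
import hashlib
import json
from typing import Dict, List

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: Dict[str, str]) -> str:
    # Hash a canonical JSON form so the same fields always give the same digest.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_event(log: List[Dict[str, str]], actor: str, action: str,
                 resource: str, timestamp: str) -> Dict[str, str]:
    """Append one access event, linked to the previous entry by its hash."""
    body = {
        "actor": actor,
        "action": action,
        "resource": resource,
        "timestamp": timestamp,
        "prev_hash": log[-1]["hash"] if log else GENESIS_HASH,
    }
    entry = {**body, "hash": _digest(body)}
    log.append(entry)
    return entry


def verify_chain(log: List[Dict[str, str]]) -> bool:
    """Return True only if no entry has been altered, removed, or reordered."""
    prev_hash = GENESIS_HASH
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if body["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
            return False
        prev_hash = entry["hash"]
    return True


# Hypothetical events from a local training run.
audit_log: List[Dict[str, str]] = []
append_event(audit_log, "svc-train", "read", "datasets/customer-records", "2025-01-15T09:00:00Z")
append_event(audit_log, "svc-train", "write", "models/checkpoint-001", "2025-01-15T11:30:00Z")
print(verify_chain(audit_log))
```

Because each entry depends on the one before it, editing or deleting an earlier record changes the hashes that follow, so an auditor can detect tampering by re-running `verify_chain`.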

While cloud-based AI will continue to play a role in many organizations, there is a growing recognition that certain workloads, particularly those involving sensitive or regulated data, are better suited to on-prem environments. This shift reflects a broader need for control and accountability in an increasingly regulated landscape, and it is driving interest in approaches that can bring AI capabilities closer to the data without sacrificing performance or scalability.

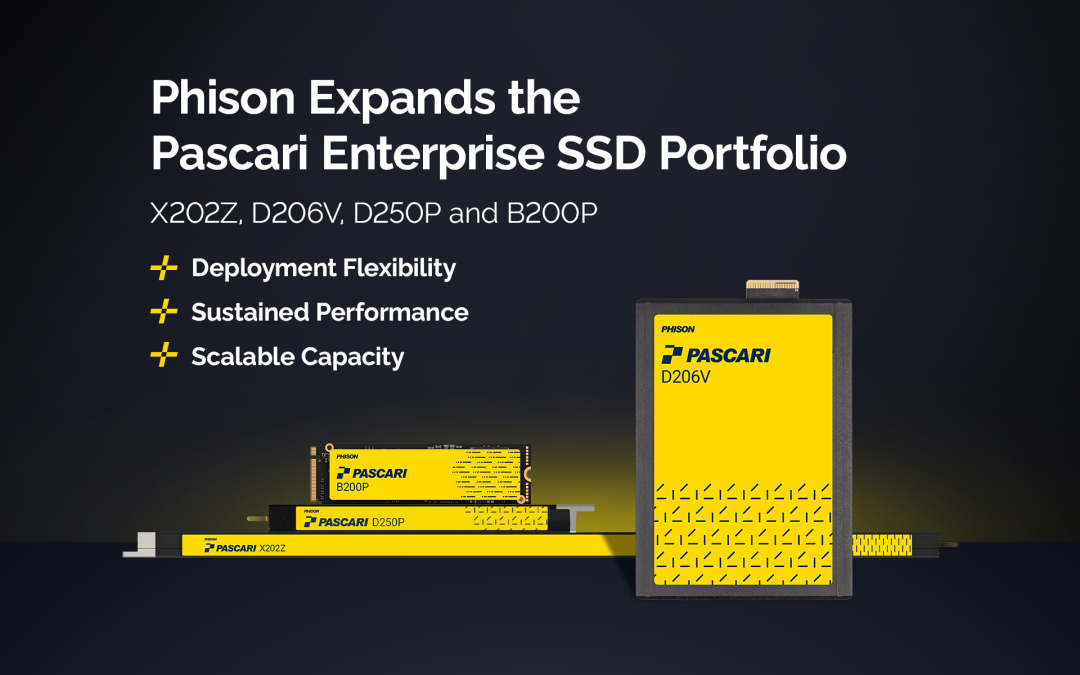

Where Phison’s Pascari aiDAPTIV™ fits in sovereign AI infrastructure

As organizations look to align AI innovation with regulatory requirements, infrastructure solutions must support both performance and control. This is where Phison’s approach to AI infrastructure becomes relevant.

Pascari aiDAPTIV is a cost-effective platform that enables organizations of all sizes and budgets to run AI workloads locally and train and deploy models within their own environments. By keeping training data on-prem, it helps maintain clear jurisdictional boundaries and supports compliance with data sovereignty regulations.

This approach also strengthens auditability. With AI workloads running locally, you can track data flows, monitor access, and maintain detailed records of how models are trained and used. These capabilities are essential for demonstrating compliance with frameworks such as GDPR and emerging AI-specific regulations.

Another advantage is the ability to align infrastructure with existing data governance policies. Rather than adapting processes to fit external environments, you can build AI workflows that integrate seamlessly with your internal controls and security practices.

Performance remains a key consideration as well. aiDAPTIV is designed to support efficient AI training without requiring large-scale cloud resources. This makes it possible to achieve strong performance while maintaining control over sensitive data.

Ultimately, the goal is to remove the trade-offs that often exist between innovation and compliance. By enabling on-prem AI training with strong governance and audit capabilities, aiDAPTIV can help you move forward with confidence.

Bring AI closer to your data

AI and data sovereignty are becoming deeply interconnected. As regulations continue to evolve, it’s important to take a more deliberate approach to how you design and deploy AI systems.

This starts with understanding the regulatory landscape and the specific requirements that apply to your data. It also requires a clear view of how your infrastructure supports or limits your ability to meet those requirements.

On-prem AI training with solutions such as aiDAPTIV is emerging as a practical path forward for many organizations, particularly those operating in regulated industries. It offers a way to maintain control, enhance auditability, and align with jurisdictional expectations without slowing down innovation.

As you evaluate your AI strategy, it’s worth taking a closer look at how your infrastructure decisions impact your regulatory posture. With the right approach, you can navigate global regulations with confidence while continuing to unlock the value of AI.

To learn more about how Pascari aiDAPTIV supports sovereign AI infrastructure and compliant on-prem training environments, visit our website or contact us.

Frequently Asked Questions (FAQ)

What is data sovereignty in AI systems?

Data sovereignty refers to the principle that data is governed by the laws of the country or region where it is stored and processed. In AI systems, this extends beyond storage to include training datasets, inference inputs and outputs, and operational logs. Enterprises must ensure that all stages of AI workflows comply with jurisdictional regulations, especially when handling sensitive or regulated data.

Why is data sovereignty critical for AI deployment?

AI models depend on large datasets, often containing sensitive information. If data crosses jurisdictions, organizations risk losing control over compliance and governance. Sovereignty ensures that data handling aligns with legal frameworks, reducing exposure to regulatory penalties and strengthening audit readiness.

How do regulations like GDPR and the EU AI Act impact AI infrastructure?

These frameworks require transparency, traceability, and accountability in AI systems. Organizations must demonstrate how data is processed, how models are trained, and how decisions are made. This shifts compliance from simple data storage requirements to full lifecycle governance of AI operations.

What is the difference between data residency, sovereignty, and localization?

Data residency focuses on where data is stored. Data sovereignty adds legal control and governance over access and processing. Data localization enforces strict geographic boundaries for both storage and processing. For AI, sovereignty has the broadest scope because it covers legal authority over end-to-end data handling, not just location.

Can cloud-based AI environments meet sovereignty requirements?

Cloud platforms can support data residency through regional hosting, but sovereignty depends on how data is accessed and processed. Cross-border access, third-party control, and shared infrastructure can introduce compliance risks. Organizations must evaluate whether cloud configurations meet full regulatory expectations.

How does Pascari aiDAPTIV™ support data sovereignty?

Pascari aiDAPTIV enables on-prem AI training, so data remains within environments you control. This approach helps enforce jurisdictional boundaries, reduces reliance on third-party infrastructure, and aligns AI workflows with internal governance policies. It supports compliance without compromising performance.

What advantages does on-prem AI provide for auditability?

On-prem deployments allow full visibility into data flows, access controls, and model behavior. Enterprises can maintain detailed logs and enforce consistent security policies. This simplifies regulatory audits and provides verifiable evidence of compliance across AI operations.

How does aiDAPTIV balance performance with compliance?

aiDAPTIV is engineered for high-performance AI workloads without requiring hyperscale cloud infrastructure. Its controller-level optimization enables efficient training and inference while maintaining low-latency data access and strict governance controls within local environments.

Can aiDAPTIV integrate with existing enterprise data governance frameworks?

Yes. aiDAPTIV is designed for seamless integration with enterprise IT environments. Organizations can align AI workflows with existing encryption standards, access policies, and compliance frameworks, ensuring consistent governance across all data operations.

Why should enterprises consider on-prem AI for regulated workloads?

Regulated industries require strict control over sensitive data. On-prem AI reduces the uncertainties that come with third-party environments, supports compliance with localization and sovereignty laws, and makes governance more predictable. Solutions like aiDAPTIV can help organizations scale AI initiatives while staying aligned with regulatory requirements.