Agentic AI workloads demand more memory than traditional AI, especially when running locally. As models grow and agents maintain long-running state, memory becomes the primary bottleneck. This article explains how aiDAPTIV extends effective AI memory to enable larger, more capable models to run reliably on practical systems.

Persistent, tool-using AI agents are moving into real workflows. AMD has coined a new device category for this moment, the “Agent Computer.” NVIDIA announced NemoClaw, an open-source security and privacy layer built on top of OpenClaw that adds policy-based guardrails for enterprise deployments. Everyone is talking about what agents can do. Far less attention is going to what it takes to run capable agentic AI well on local systems.

That matters because agentic workloads raise the bar. They do more than answer a single prompt. They plan, use tools, keep state over time, and work across multiple steps. For that kind of work, model quality matters more, which often pushes developers toward larger, more capable models.

Why local agentic AI matters

The case for running AI agents locally is straightforward. Local deployment keeps sensitive data on-device, avoids cloud inference costs that scale with usage, reduces latency for interactive workloads, and gives developers more control over the model and its behavior. For enterprises handling proprietary data, and for OEMs building always-on agent experiences, local inference is often a requirement, not just a preference.

The problem is that many developers still rely on the cloud for capable agentic AI for a simple reason: stronger models are easier to run there.

For many local systems, the bottleneck is not compute alone. It is memory. GPU VRAM is limited. System DRAM or unified memory is limited. When memory runs short, local deployments often fall back to smaller models, tighter limits, or more aggressive quantization.

Those tradeoffs may help a model fit, but they can also reduce reliability in multi-step reasoning, tool use, and longer-running tasks. In other words, local agentic AI often settles for smaller models not because smaller models are ideal, but because memory limits force the compromise.

What makes agentic workloads harder

An AI agent is more than a chatbot. Instead of answering one prompt and stopping, it can keep state over time, use tools, check external systems, and take actions on your behalf.

That changes the memory problem.

Most chatbot interactions are relatively short-lived compared with agent workflows. Agents are different. They maintain persistent session state, manage long context windows, monitor changing data sources over time, and orchestrate multiple tools simultaneously. That means they often need to keep more state available for longer.

For developers building more complex workflows, it may not be just one agent. A coding agent tied to a repo, a research agent monitoring data sources, and a writing agent managing long-form context each bring their own session state and tools, multiplying memory demand quickly.

Local hardware hits a memory wall

A large local model at 4-bit quantization can require dozens of gigabytes just for weights. A high-end consumer GPU may have 24 GB of VRAM. The math gets difficult before you account for KV cache, runtime overhead, or agent state.

That is why local agentic AI often gets pushed toward smaller models. The issue is not just whether a model can launch. It is whether a more capable model can run reliably enough to support persistent, tool-using, multi-step workloads on practical client hardware.

Without a solution, developers and OEMs face hard tradeoffs: truncate context and lose coherence, accept latency spikes when data spills into slower tiers, or require expensive high-memory GPUs that price many users out of the market. None of these are ideal if agent PCs are supposed to reach mainstream adoption.

How Phison’s Pascari aiDAPTIV™ extends AI memory

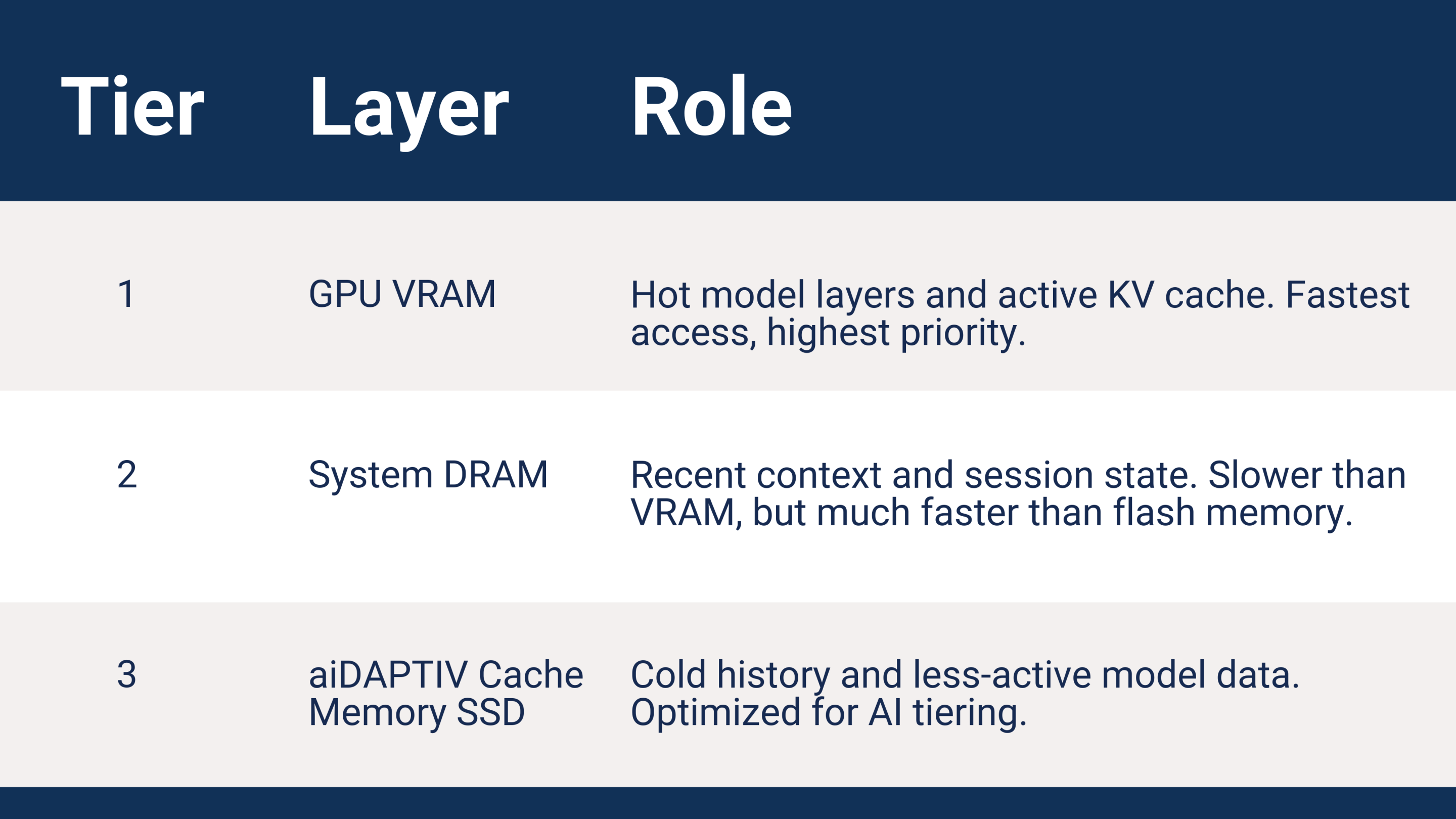

aiDAPTIV addresses these challenges by extending effective AI memory across GPU memory, system DRAM, and flash memory, creating a memory hierarchy that helps larger models run on more practical systems without requiring developers to manage each tier manually.

Hot data stays in VRAM. Warm data stays in DRAM, ready for fast reuse. Colder data can tier to an aiDAPTIV Cache Memory SSD instead of being discarded outright. This does not eliminate latency tradeoffs, but it can make larger and longer-running agent workloads practical on systems that would otherwise hit memory limits much sooner.

How aiDAPTIV runs larger MoE models locally

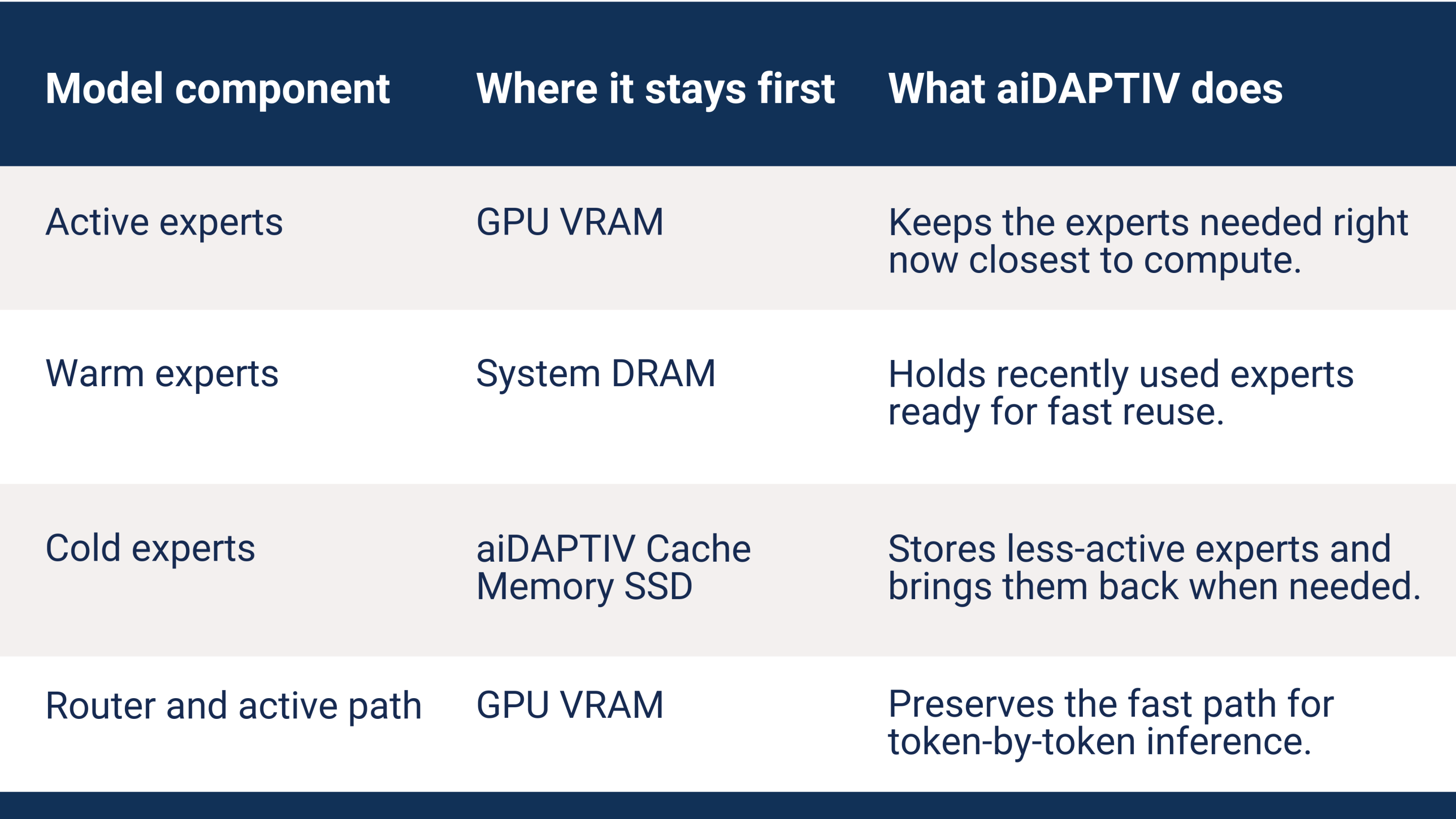

In a Mixture of Experts model, only a subset of experts is active for each token. aiDAPTIV helps keep the active or recently used experts closer to compute, while less-active experts can tier to lower-cost memory.

The router selects the experts needed for the current token. Active experts stay in GPU memory for immediate execution. Recently used experts can remain in system DRAM for fast reuse. Less-active experts can tier to aiDAPTIV Cache Memory instead of forcing the model down to a smaller configuration. When one of those experts is needed again, aiDAPTIV helps bring it back into a faster tier.

aiDAPTIV’s dynamic MoE offloading integrates with llama.cpp, making this capability available through a standard inference API endpoint.

GTC 2026: Running a 120B model on a laptop

At GTC 2026, Phison demonstrated a simple OpenClaw app on an Acer laptop with an NVIDIA® GeForce RTX™ 5090 GPU, 24 GB of VRAM, and 64 GB of system DRAM. With aiDAPTIV, the system ran gpt-oss-120B locally, using MoE expert offloading to extend effective memory, at approximately 15 tokens per second. According to OpenAI, gpt-oss-120B requires a single 80 GB GPU to run natively, more than three times the VRAM available on the demo system. That is the tradeoff aiDAPTIV changes. Instead of forcing local agentic AI to settle for a smaller model, it helps larger, more capable models run on practical client hardware.

Note: OpenAI’s model card lists 80 GB for single-GPU deployment. Demo throughput was approximately 15 tok/s, or approximately 5–6 tok/s with KV cache reuse enabled.

What this means for device makers

OEMs building agent PCs today face a difficult choice: spec a GPU with enough memory to handle larger agent workloads, which raises cost and limits the addressable market, or ship with constrained memory and accept reduced capability. Neither is an ideal product story.

aiDAPTIV changes that equation. A mid-range or memory-constrained client system paired with fast DRAM and aiDAPTIV Cache Memory can extend effective memory, making larger agent workloads practical without requiring much-higher-memory GPUs.

That matters especially in notebooks and other lower-memory client systems. As integrated GPUs and client GPUs become more capable, memory remains one of the main barriers to running serious agentic workloads locally. Intelligent memory tiering helps make longer-running, more capable agents practical on thinner, lower-cost systems that would otherwise hit those limits much sooner.

The gap aiDAPTIV fills

The shift to agentic AI exposes a practical problem underneath the excitement: stronger local agents need stronger models, and stronger models need more memory. That memory-elasticity layer remains a real gap in the local AI stack. That is the problem aiDAPTIV is built to solve.

Work with Phison

AI memory is often the bottleneck for local agentic AI. Contact us to find out how aiDAPTIV can help.

Frequently Asked Questions (FAQ) :

What is agentic AI and how is it different from traditional AI models?

Agentic AI refers to systems that go beyond single-response interactions. These agents plan tasks, use external tools, maintain memory across sessions, and execute multi-step workflows. Unlike traditional chatbots, they require persistent state and longer context handling, increasing both compute and memory demands.

Why is memory a critical bottleneck for local AI deployment?

Local systems are constrained by GPU VRAM and system DRAM capacity. Advanced models require tens of gigabytes for weights alone, not including runtime overhead like KV cache and agent state. When memory is insufficient, developers must reduce model size or performance, limiting real-world usability.

Why do enterprises prefer running AI locally instead of in the cloud?

Local AI deployment ensures data sovereignty, reduces inference latency, eliminates recurring cloud costs, and provides full control over model behavior. For enterprise IT environments handling proprietary data, local inference is often a compliance and security requirement.

What challenges do agentic workloads introduce compared to standard inference?

Agentic workloads require continuous memory allocation for session state, tool orchestration, and long context retention. Multiple concurrent agents can amplify memory demand, making traditional hardware configurations insufficient without optimization.

Why are larger models necessary for agentic AI?

Larger models generally deliver better reasoning, planning, and tool-use capabilities. These attributes are essential for reliable multi-step workflows. However, their memory requirements make them difficult to deploy on standard client hardware.

How does Phison aiDAPTIV™ improve local AI performance?

aiDAPTIV introduces a hierarchical memory architecture that dynamically distributes workloads across GPU VRAM, system DRAM, and SSD-based cache. This approach expands effective memory capacity without requiring manual data management, enabling larger models to run efficiently on constrained systems.

What role does aiDAPTIV play in Mixture of Experts (MoE) models?

aiDAPTIV optimizes MoE execution by keeping active experts in VRAM, recently used experts in DRAM, and less-active experts in SSD cache. This dynamic tiering ensures that only necessary components remain in high-speed memory, improving efficiency without reducing model size.

How does aiDAPTIV enable large model deployment on consumer hardware?

By offloading inactive model components to lower-cost memory tiers, aiDAPTIV allows systems with limited VRAM to run models that would traditionally require high-end GPUs. This significantly lowers hardware barriers for local AI deployment.

What was demonstrated at GTC 2026 using aiDAPTIV?

Phison demonstrated a 120B parameter model running locally on a laptop with a 24 GB GPU. The system achieved approximately 15 tokens per second using aiDAPTIV’s memory tiering and MoE offloading, proving that large-scale models can operate on practical hardware.

How does aiDAPTIV benefit OEMs and enterprise system builders?

aiDAPTIV enables OEMs to design AI-capable systems without over-provisioning expensive GPU memory. It supports scalable, low-latency, and AI-ready architectures, making it possible to deliver enterprise-grade agentic AI performance in cost-efficient devices.